We use AI in exactly one place. Here's why.

April 9, 2026 · Keith Mangold · 8 min read

Every software company on the planet is currently answering the same question the same way. The question is "how are you using AI?" and the answer is "we are absolutely, definitely, urgently using AI in the most innovative, transformative, game-changing ways, and you should be too."

The marketing pages have all been updated. The homepage hero says "AI-powered." The feature list has a little sparkle icon next to it. The press release uses the phrase "leveraging generative AI to unlock." The product roadmap promises seven more AI features by Q3. If a SaaS company doesn't have an AI story at this exact moment, the assumption is that it's behind the curve.

I have a different take. It isn't "we shouldn't use AI." We do use AI, and I'll tell you exactly where. It's "we shouldn't bolt AI onto a product just to have an AI story." Those are two very different positions, and most of the software industry is currently conflating them. I think that conflation is making the products worse, not better.

The place we actually do use AI

VIBEPro uses AI in exactly one place today: reviewing the documents, certificates, and image uploads vendors send in to a market, both when they first apply and every time those documents have to be renewed.

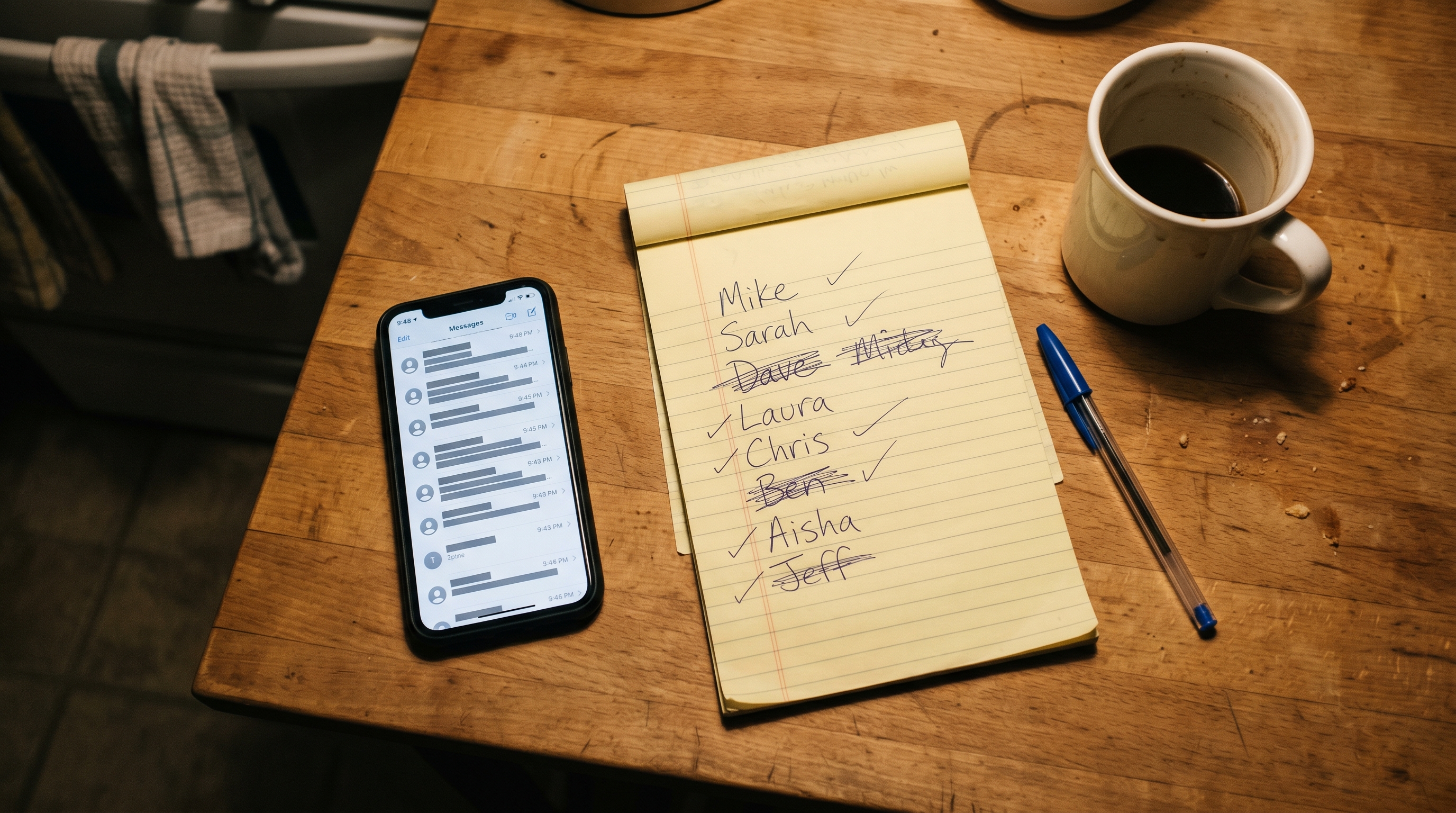

A typical vendor at a weekly farmers market has to hand in several documents before they're approved. A certificate of insurance naming the market as additional insured. A cottage food permit if they bake. A USDA organic certification if they claim it. A resale permit. Sometimes a fire marshal sign-off. Some of those arrive as clean PDFs from a broker or a government website. Some arrive as scanned JPGs. Some arrive as a phone photo of a paper certificate taken right at the vendor's kitchen table. Every one of them has an expiration date. Every one of them has the vendor's business name on it somewhere. And every one of them, historically, required a market manager to open the file, squint at the date, squint at the business name, and then type "OK" into a checklist.

Here's the part most people miss about this job: it is not one and done. An insurance certificate expires every six or twelve months. A cottage food permit has its own renewal cycle. A health department card gets reissued every year or two. Which means every certificate a vendor hands in on their first application is a certificate the market will be checking again three months later, and again three months after that, for as long as that vendor keeps showing up on Saturday. The work is at the front door, and then every quarter forever.

That shape of work is exactly what modern document models are good at. When a vendor uploads an insurance certificate, whether it arrives as a PDF, a scan, or a phone snapshot, VIBEPro reads it and tries to answer a short list of concrete questions. Is this actually an insurance certificate, or did the vendor upload a phone bill by mistake? When does it expire? Is the expiration in the future? Does the named insured match the vendor's business? Is the market listed as additional insured where it's supposed to be? If the answers look clean, the document is marked validated and the manager never has to open it. If anything is off, say a missing additional-insured endorsement, an expiration last month, or a business name that doesn't match, the manager gets a flag with the specific reason and the original file to review.
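The checklist in that paragraph is small enough to write down. Below is a minimal Python sketch of only the rule-checking half, assuming a document model has already extracted the document type, expiration date, named insured, and additional insureds from the upload. The `ExtractedCertificate` shape, the `review` and `normalize` helpers, and the deliberately naive name matching are all hypothetical illustrations, not VIBEPro's actual code.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Tuple
import re


@dataclass
class ExtractedCertificate:
    # Hypothetical output of the document-model step (not shown here).
    doc_type: str                      # e.g. "insurance_certificate", "phone_bill"
    expiration: Optional[date]         # None if no date could be read
    named_insured: Optional[str]
    additional_insureds: List[str] = field(default_factory=list)


def normalize(name: str) -> str:
    # Naive matching: lowercase, drop punctuation and common entity suffixes,
    # so "Rosa's Bakery, LLC" and "Rosas Bakery" compare equal.
    name = re.sub(r"[^a-z0-9 ]", "", name.lower())
    name = re.sub(r"\b(llc|inc|co|corp|ltd)\b", "", name)
    return " ".join(name.split())


def review(cert: ExtractedCertificate, vendor_business: str,
           market_name: str, today: date) -> Tuple[str, List[str]]:
    reasons: List[str] = []

    # Is this actually an insurance certificate?
    if cert.doc_type != "insurance_certificate":
        reasons.append(f"not an insurance certificate (looks like {cert.doc_type})")

    # Is there an expiration date, and is it in the future?
    if cert.expiration is None:
        reasons.append("no expiration date found")
    elif cert.expiration <= today:
        reasons.append(f"expired on {cert.expiration.isoformat()}")

    # Does the named insured match the vendor's business?
    if cert.named_insured is None or normalize(cert.named_insured) != normalize(vendor_business):
        reasons.append("named insured does not match the vendor's business")

    # Is the market listed as additional insured?
    if normalize(market_name) not in {normalize(n) for n in cert.additional_insureds}:
        reasons.append("market is not listed as additional insured")

    # Never auto-reject: anything off is routed to a manager with the reasons attached.
    return ("validated", []) if not reasons else ("needs_review", reasons)
```

The one design choice worth copying is the return value: the function can only answer "validated" or "needs_review" with specific reasons, never "rejected," which mirrors the rule that a manager always makes the final call.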

The reason AI was the right tool for this job isn't that AI is a buzzword. It's that the job has three specific characteristics:

- The inputs are unstructured. Every insurance carrier formats their certificates differently, so you can't write a deterministic parser once and expect it to keep working next month.

- The task is bounded and verifiable. The answer is either "this looks right" or "this specific thing is wrong." Not a judgment call, not a matter of taste, just a concrete yes-or-no on a finite list of questions.

- The cost of a missed edge case is low. The manager always sees the flag. We never auto-reject a vendor. The AI is a pre-screener that removes tedium, not a gate that makes decisions.

When a job has all three of those characteristics, AI isn't a gimmick. It's the right tool. And when it's the right tool, we use it without feeling the need to put a sparkle icon on the marketing page.

Where we don't use AI, and why not

Now the more interesting part. Here are seven places, off the top of my head, where a VIBEPro competitor could plausibly bolt AI onto their marketing page tomorrow morning. I've thought about most of them. None of them are in the product right now. Each one has a reason.

1. Optimal booth-map layout

The idea: feed the booth map, the vendor list, historical foot-traffic data, and a set of constraints (don't put two tomato farmers next to each other, keep the bread vendor away from the soap vendor, give the coffee cart the corner) into a model and have it suggest an optimized layout every week.

Why it's not built: the market managers I've watched do this work already know exactly where the bread vendor wants to be, why the soap vendor complains when he's downwind of the kettle corn, and why the new hot sauce vendor needs to be next to the Thai food truck (they cross-sell). A model can't know any of that. A drag-and-drop booth map that remembers last week's layout and flags conflicts before Saturday is genuinely more useful than a model that "optimizes" a set of constraints the manager never encoded.
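For contrast, the non-AI alternative in that paragraph is mostly bookkeeping. Here is a minimal Python sketch, assuming the map is stored as booth ids, with a list of adjacent booth pairs and a set of keep-apart category rules that the manager enters by hand. `find_conflicts`, `moved_vendors`, and the data shapes are hypothetical, not taken from VIBEPro.

```python
from typing import Dict, FrozenSet, List, Set, Tuple

# Hypothetical data shapes for illustration only:
#   layout: booth id -> (vendor name, product category)
#   adjacent: pairs of booth ids that share a side on the map
#   keep_apart: category pairs the manager never wants side by side
Layout = Dict[int, Tuple[str, str]]


def find_conflicts(layout: Layout, adjacent: List[Tuple[int, int]],
                   keep_apart: Set[FrozenSet[str]]) -> List[str]:
    conflicts = []
    for a, b in adjacent:
        if a not in layout or b not in layout:
            continue  # an empty booth can't conflict with anything
        (_, cat_a), (_, cat_b) = layout[a], layout[b]
        if cat_a == cat_b:
            conflicts.append(f"booths {a} and {b}: two {cat_a} vendors side by side")
        elif frozenset((cat_a, cat_b)) in keep_apart:
            conflicts.append(f"booths {a} and {b}: {cat_a} next to {cat_b}")
    return conflicts


def moved_vendors(last_week: Layout, this_week: Layout) -> List[str]:
    # Vendors who were on last week's map but are in a different booth this week.
    last_booth = {vendor: booth for booth, (vendor, _) in last_week.items()}
    return sorted(vendor for booth, (vendor, _) in this_week.items()
                  if vendor in last_booth and last_booth[vendor] != booth)
```

Every rule here is one the manager wrote down. The tool checks the manager's knowledge instead of trying to infer it.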

2. Application triage and jury scoring

The idea: when a new vendor applies, have a model read the application, scrape their website, analyze their product photos, and hand the manager a predicted fit score.

Why it's not built: for every market I've talked to, this isn't the bottleneck. The bottleneck is collecting the application in the first place, and then actually remembering to review it before Saturday. Those are process problems, not intelligence problems. A better reminder, a better review queue, and saved-response templates for the three most common rejection reasons are more useful, and faster to ship, than a fit score that a manager would second-guess anyway.

3. Attendance and weather prediction

The idea: train a model on historical attendance data, weather forecasts, calendar events, and holiday proximity, and predict how many vendors will actually show up on a given Saturday. Flag likely no-shows in advance.

Why it's not built: I want to know it actually moves the needle before I build it. A market manager texting sixty vendors every Friday already knows who's flaky. The bigger opportunity is making that check-in not happen one vendor at a time, and that's a workflow problem, not a prediction problem. Once the check-in is automated, the manager ends up with the same information the model would have produced, without the model.

4. Sales forecasting and fee optimization

The idea: use historical sales data to forecast next month's revenue or recommend dynamic fee adjustments. "Based on the data, you should charge artisan vendors 8% sales-based fees instead of 6%."

Why it's not built: markets set fee rules for community reasons, not revenue-maximization reasons. A board meets, they argue, they decide. The decision is social, not mathematical. A model that suggests raising fees is going to be ignored, and should be ignored, because the model doesn't know the board already argued about the same thing for an hour last month. That's not a problem I can solve with more data; it's a problem I shouldn't solve at all.

5. Vendor clustering and pairing

The idea: group vendors into clusters based on product type, foot-traffic pattern, and historical co-attendance, and suggest which vendor types pair well next to each other on the map.

Why it's not built: honestly, I like this one. Clustering is a textbook machine-learning problem, and the answer might genuinely surprise a manager who has been laying out the same market the same way for ten years. But it depends on data nobody has yet. We've run markets for just over a year, which isn't nearly enough co-attendance history to cluster anything meaningfully. This one stays on the list. Ask me again in a year.

6. Natural-language vendor support chat

The idea: a chatbot that answers vendor questions so the manager doesn't have to.

Why it's not built: vendors who are confused don't want to talk to a bot. They want to text the manager, and they want a quick, personal answer. I'd rather give the manager a tool that makes answering five minutes of texts feel like thirty seconds (canned responses, smart suggestions, one-tap replies) than pretend a chatbot is going to replace that relationship. The relationship is actually the product.

7. Automatic message composition

The idea: draft this week's "Saturday bulletin" to vendors based on the weather, the schedule, and recent events. Manager reviews and sends.

Why it's not built: drafting the message isn't the hard part. Deciding what to say is. The manager already knows what to say. What they need is a button that sends the thing they already wrote to everyone who's actually attending this Saturday, with delivery confirmation. That's a workflow feature, not a writing feature.

The principle behind the list

Every one of the seven ideas above is plausible. A couple are probably even genuinely good ideas. But plausibility is not the same thing as "a customer has a specific problem this would solve, that nothing simpler would solve better." When I sort my roadmap by the question "what is the most load-bearing thing I could ship next," the honest answer is almost never "a model."

It's usually a report. Or a better default. Or a fix for the thing that broke last Saturday morning. Or, most often, the feature a market manager mentioned in passing two weeks ago, in a tone that suggested she had long since given up hope of ever having it.

I'm building software for people who run farmers markets. They don't need me to be impressive. They need me to solve their actual problems. Sometimes the solution looks like AI. Most of the time it looks like a better data model, a calmer workflow, a clearer report, and a Saturday morning that goes smoother than the last one.

If you have a real problem that AI might actually solve

I'm not against building more AI into VIBEPro. I'm just not going to build it because the industry says I should. I'll build it when a market manager tells me, in their own words: "Here is the thing that's driving me crazy. I've tried everything else. I think a model might actually be what solves this." That's a conversation I want to have. It's just a very different conversation from "what's your AI story."

If you run a market and you have that problem, the one you think AI could solve, tell me about it. If AI turns out to be the right tool, we'll build it. If a better report or a smarter default or a cleaner workflow solves it instead, we'll build that. Either way, you'll have gotten the right thing. Not the thing with the sparkle icon.
